Super-sensitive electronic skin for robots and prosthetics

Robots and prosthetic devices may soon have a sense of touch equivalent to, or better than, human skin, thanks to an artificial nervous system developed at the National University of Singapore (NUS).

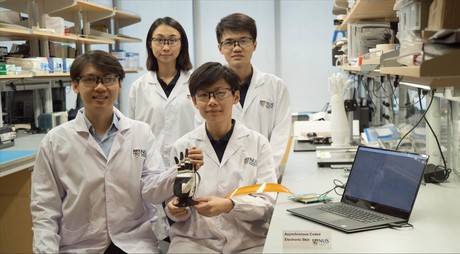

Asynchronous Coded Electronic Skin (ACES) has ultrahigh responsiveness and robustness to damage, and can be paired with any kind of sensor skin layer to function effectively as an electronic skin. It was developed by Assistant Professor Benjamin Tee and his team from NUS Materials Science and Engineering, and has been described in the journal Science Robotics.

“Humans use our sense of touch to accomplish almost every daily task, such as picking up a cup of coffee or making a handshake. Without it, we will even lose our sense of balance when walking,” said Asst Prof Tee, who has been working on electronic skin technologies for over a decade. “Similarly, robots need to have a sense of touch in order to interact better with humans, but robots today still cannot feel objects very well.”

Drawing inspiration from the human sensory nervous system, the NUS team spent a year and a half developing a sensor system that could potentially perform better. While the ACES electronic nervous system detects signals like the human sensory nervous system, it differs from existing electronic skins, whose interlinked wiring systems can make them sensitive to damage and difficult to scale up.

“The human sensory nervous system is extremely efficient, and it works all the time to the extent that we often take it for granted,” Asst Prof Tee said. “It is also very robust to damage. Our sense of touch, for example, does not get affected when we suffer a cut. If we can mimic how our biological system works and make it even better, we can bring about tremendous advancements in the field of robotics where electronic skins are predominantly applied.”

ACES can detect touch more than 1000 times faster than the human sensory nervous system, according to the researchers. For example, it is capable of differentiating physical contact between different sensors in less than 60 ns — said to be the fastest ever achieved for an electronic skin technology — even with large numbers of sensors. ACES-enabled skin can also identify the shape, texture and hardness of objects within 10 ms — 10 times faster than the blinking of an eye. This is enabled by the high fidelity and capture speed of the ACES system.

The ACES platform can be designed to achieve high robustness to physical damage — an important property for electronic skins because they come into frequent physical contact with the environment. Unlike the current system used to interconnect sensors in existing electronic skins, all the sensors in ACES can be connected to a common electrical conductor with each sensor operating independently. This allows ACES-enabled electronic skins to continue functioning as long as there is one connection between the sensor and the conductor, making them less vulnerable to damage.
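The robustness rule described above can be illustrated with a toy model. The following is a minimal sketch, not the NUS team's implementation: the `Sensor` class, the link identifiers and the helper functions are all hypothetical names introduced here, and the only behaviour taken from the article is that a sensor keeps working as long as at least one connection to the common conductor survives.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Sensor:
    """Hypothetical sensor with redundant links to one shared conductor."""

    name: str
    links: set[str] = field(default_factory=set)  # ids of intact links

    def is_functional(self) -> bool:
        # Per the article: one surviving connection is enough.
        return len(self.links) > 0


def apply_damage(sensors: list[Sensor], cut_links: set[str]) -> None:
    """Remove every cut link from each sensor, in place."""
    for sensor in sensors:
        sensor.links -= cut_links


def functional_names(sensors: list[Sensor]) -> list[str]:
    """Names of the sensors that still reach the conductor."""
    return [s.name for s in sensors if s.is_functional()]


# Assumed layout: two sensors with two links each, one sensor with one link.
sensors = [
    Sensor("s0", {"a", "b"}),
    Sensor("s1", {"c", "d"}),
    Sensor("s2", {"e"}),
]

# Simulated damage severs one link per sensor.
apply_damage(sensors, {"a", "c", "e"})
print(functional_names(sensors))  # ['s0', 's1']
```

In this sketch, the sensors with a spare link survive the simulated cut while the single-link sensor drops out, and because each sensor operates independently, losing one does not affect the others.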

The researchers say that ACES has a simple wiring system and remarkable responsiveness, even with increasing numbers of sensors, characteristics that will facilitate the scale-up of intelligent electronic skins for AI applications in robots, prosthetic devices and other human-machine interfaces. As noted by Asst Prof Tee, “Scalability is a critical consideration as big pieces of high-performing electronic skins are required to cover the relatively large surface areas of robots and prosthetic devices.

“ACES can be easily paired with any kind of sensor skin layers — for example, those designed to sense temperatures and humidity — to create high-performance ACES-enabled electronic skin with an exceptional sense of touch that can be used for a wide range of purposes,” he added. For instance, pairing ACES with the transparent, self-healing and water-resistant sensor skin layer developed by Asst Prof Tee’s team creates an electronic skin that can self-repair, like the human skin. This type of electronic skin can be used to develop more realistic prosthetic limbs that will help disabled individuals restore their sense of touch.

Other potential applications include developing more intelligent robots that can perform disaster recovery tasks or take over mundane operations such as packing of items in warehouses. The NUS team is therefore looking to further apply the ACES platform on advanced robots and prosthetic devices in the next phase of their research.
