Satellite launched with AI on board

As ubiquitous as artificial intelligence (AI) has become in modern life, until recently the technology had not found its way into orbit.

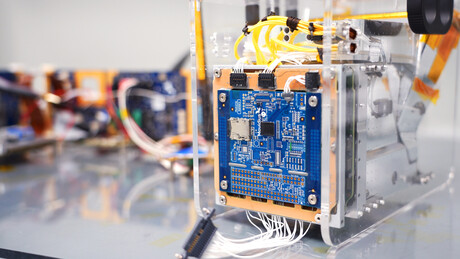

On 2 September, an experimental satellite about the size of a cereal box was ejected from a rocket’s dispenser along with 45 other similarly small satellites. The satellite, named PhiSat-1, contains a new hyperspectral-thermal camera and onboard AI processing, thanks to an Intel Movidius Myriad 2 vision processing unit (VPU) — the same chip inside many smart cameras and even a $99 selfie drone here on Earth.

PhiSat-1 is one of a pair of satellites on a mission to monitor polar ice and soil moisture while also testing intersatellite communication systems, a step towards a future network of federated satellites. The Myriad 2's job, meanwhile, is to handle the large volume of data generated by high-fidelity cameras like the one on PhiSat-1.

“The capability that sensors have to produce data increases by a factor of 100 every generation, while our capabilities to download data are increasing, but only by a factor of three, four, five per generation,” said Gianluca Furano, Data Systems and Onboard Computing Lead at the European Space Agency (ESA), which led the collaborative effort behind PhiSat-1.
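To put the rates Furano quotes in perspective, here is a rough back-of-the-envelope sketch. It assumes, purely for illustration, that sensor output and downlink capacity start out matched and then grow by the quoted factors each generation: 100 for sensors, and 4 (the midpoint of his three-to-five range) for downlink. The function name and defaults are hypothetical.

```python
def downlinkable_fraction(generations: int,
                          sensor_growth: float = 100.0,
                          downlink_growth: float = 4.0) -> float:
    """Share of newly generated data that fits through the downlink.

    Illustrative assumption: at generation 0 the downlink can carry
    everything the sensor produces (fraction 1.0), and both then grow
    by a constant factor per generation.
    """
    return (downlink_growth / sensor_growth) ** generations


if __name__ == "__main__":
    # Print how quickly the gap opens over a few sensor generations.
    for gen in range(4):
        print(f"generation {gen}: {downlinkable_fraction(gen):.6f}")
```

Under these assumptions, only about 0.16% of the data could be sent down after just two generations, which is why filtering on board becomes attractive.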

Compounding the problem, about two-thirds of the planet’s surface is covered by cloud at any given moment. That means a great many useless images of clouds are typically captured, saved, sent to Earth over precious downlink bandwidth, saved again and reviewed by a scientist (or an algorithm) hours or days later, only to be deleted.

“Artificial intelligence at the edge came to rescue us, the cavalry in the Western movie,” Furano said. The team rallied around the idea of using onboard processing to identify and discard cloudy images, saving about 30% of the bandwidth.
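The filtering idea can be sketched in a few lines. This is a minimal illustration, not the PhiSat-1 flight software: the `cloud_score` callable stands in for the onboard neural network, and the `Image` type, the 0.7 threshold and the byte counts are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Image:
    """Hypothetical record for one captured image."""
    image_id: str
    size_bytes: int


def filter_for_downlink(
    images: List[Image],
    cloud_score: Callable[[Image], float],
    threshold: float = 0.7,
) -> Tuple[List[Image], float]:
    """Keep images whose predicted cloud cover is below `threshold`.

    Returns the images to downlink and the fraction of bytes saved
    by discarding the rest on board.
    """
    kept = [img for img in images if cloud_score(img) < threshold]
    total = sum(img.size_bytes for img in images)
    sent = sum(img.size_bytes for img in kept)
    saved = 1.0 - sent / total if total else 0.0
    return kept, saved


if __name__ == "__main__":
    captured = [Image("a", 100), Image("b", 100), Image("c", 100)]
    # Stand-in scores; on a real satellite these would come from the
    # cloud-detection network running on the VPU.
    scores = {"a": 0.9, "b": 0.2, "c": 0.5}
    kept, saved = filter_for_downlink(captured, lambda img: scores[img.image_id])
    print([img.image_id for img in kept], f"{saved:.0%} of bytes saved")
```

Here image "a" is judged too cloudy and dropped, so a third of the bytes never leave the satellite. Passing the scorer in as a function keeps the filtering logic independent of whichever model produces the scores.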

Irish start-up Ubotica built and tested PhiSat-1’s AI technology, working closely with camera developer cosine, the University of Pisa and Sinergise to create the complete solution. As Ubotica’s Chief Technology Officer, Aubrey Dunne, explained, “The Myriad was absolutely designed from the ground up to have an impressive compute capability but in a very low-power envelope, and that really suits space applications.”

The Myriad 2, however, was not intended for orbit. Spacecraft computers typically use very specialised “radiation-hardened” chips that can be “up to two decades behind state-of-the-art commercial technology”, Dunne said. And AI has so far not been on the menu.

Dunne and the Ubotica team performed ‘radiation characterisation’, putting the Myriad chip through a series of tests to figure out how to handle any resulting errors or wear and tear. Furano noted that the ESA “had never tested a chip of this complexity for radiation”, so they “had to write the handbook on how to perform a comprehensive test and characterisation for this chip from scratch”.

The Myriad 2 passed the tests in off-the-shelf form, with no modifications needed. The low-power, high-performance computer vision chip was ready to venture beyond Earth’s atmosphere. But then came another challenge.

Typically, AI algorithms are built, or ‘trained’, by feeding them large quantities of data from which they ‘learn’: in this case, what is and isn’t a cloud. But because the camera was so new, said Furano, “we didn’t have any data. We had to train our application on synthetic data extracted from existing missions”.

All this system and software integration and testing, with the involvement of half a dozen different organisations across Europe, took four months to complete. As far as spacecraft development goes, the timeline was “a miracle”, according to Furano.

The satellite’s launch from French Guiana went smoothly. For initial verification, the satellite saved every image and recorded its AI cloud-detection decision for each one, so the team on the ground could check that its onboard ‘brain’ was behaving as expected.
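On the ground, checking those logged decisions amounts to comparing them with a reference labelling of the same images. The sketch below assumes, hypothetically, that both are available as dictionaries mapping an image ID to `True` (cloudy) or `False` (clear); the function name and data layout are illustrative and do not describe ESA's actual verification tooling.

```python
from typing import Dict, List, Tuple


def compare_decisions(
    onboard: Dict[str, bool], reference: Dict[str, bool]
) -> Tuple[float, List[str]]:
    """Compare onboard cloudy/clear decisions with reference labels.

    Only images present in both logs are compared. Returns the agreement
    rate and the sorted IDs of images where the two disagree.
    """
    common = onboard.keys() & reference.keys()
    if not common:
        raise ValueError("no image IDs in common between the two logs")
    mismatches = sorted(k for k in common if onboard[k] != reference[k])
    agreement = 1.0 - len(mismatches) / len(common)
    return agreement, mismatches


if __name__ == "__main__":
    onboard = {"img1": True, "img2": False, "img3": True}
    reference = {"img1": True, "img2": True, "img3": True, "img4": False}
    rate, wrong = compare_decisions(onboard, reference)
    print(f"agreement {rate:.2f}, mismatches {wrong}")
```

Returning the mismatched IDs, not just a score, lets analysts pull up exactly the images where the onboard model and the reference disagree.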

The ESA soon confirmed “the first-ever hardware-accelerated AI inference of Earth observation images on an in-orbit satellite”. By sending only useful pixels, the agency said, the satellite will “improve bandwidth utilisation and significantly reduce aggregated downlink costs”, not to mention saving scientists time on the ground.

Looking forward, the possible applications for low-cost, AI-enhanced satellites are numerous, particularly when a single satellite can run multiple applications. As Jonathan Byrne, Head of the Intel Movidius technology office, explained: “Rather than having dedicated hardware in a satellite that does one thing, it’s possible to switch networks in and out.” Dunne calls this “satellite-as-a-service”.

When flying over areas prone to wildfire, a satellite can spot fires and notify local responders in minutes rather than hours. Over oceans, which are often overlooked, a satellite can spot rogue ships or environmental accidents. Over forests and farms, it can track soil moisture and crop growth. Over ice, it can track ice thickness and melt ponds to help monitor climate change.

ESA and Ubotica are now working together on PhiSat-2, which will carry another Myriad 2 into orbit. PhiSat-2 will be able to run AI apps that can be installed, validated and operated on the spacecraft while it is in flight, using a simple user interface. Intel describes the potential impact as unquestionable.
