AI-powered wearable turns gestures into robot commands

Engineers at the University of California San Diego have developed a next-generation wearable system that enables people to control machines using everyday gestures, even while running, riding in a car or floating on turbulent ocean waves.

The system, described in a paper published in Nature Sensors, combines stretchable electronics with artificial intelligence to overcome a longstanding challenge in wearable technology: reliable recognition of gesture signals in real-world environments.

Wearable technologies with gesture sensors work fine when a user is sitting still, but the signals start to fall apart under excessive motion noise, explained study co-first author Xiangjun Chen, a postdoctoral researcher at the UC San Diego Jacobs School of Engineering. This limits their practicality in daily life. “Our system overcomes this limitation. By integrating AI to clean noisy sensor data in real time, the technology enables everyday gestures to reliably control machines even in highly dynamic environments,” Chen said.

The technology could enable patients in rehabilitation or individuals with limited mobility, for example, to use natural gestures to control robotic aids without relying on fine motor skills. Industrial workers and first responders could potentially use the technology for hands-free control of tools and robots in high-motion or hazardous environments. It could even enable divers and remote operators to command underwater robots despite turbulent conditions. In consumer devices, the system could make gesture-based controls more reliable in everyday settings.

To the researchers’ knowledge, this is the first wearable human–machine interface that works reliably across a wide range of motion disturbances. As a result, it can work with the way people actually move.

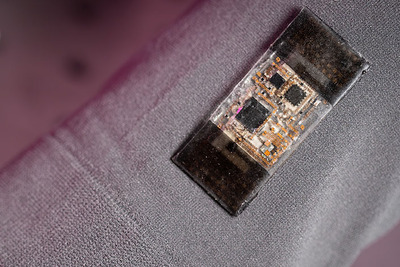

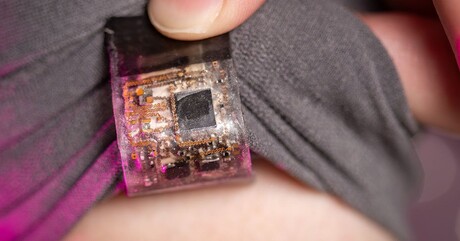

The device is a soft electronic patch glued onto a cloth armband. It integrates motion and muscle sensors, a Bluetooth microcontroller and a stretchable battery into a compact, multilayered system. The system was trained on a composite dataset of real gestures and conditions, from running and shaking to the movement of ocean waves. Signals from the arm are captured and processed by a customised deep-learning framework that strips away interference, interprets the gesture and transmits a command to control a machine, such as a robotic arm, in real time.
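For readers who want a concrete picture of that capture, clean, interpret and command sequence, the following is a minimal illustrative sketch and not the researchers' method. It assumes a single muscle-sensor channel, swaps the deep-learning framework for a simple moving-average drift filter and a nearest-centroid classifier, and uses hypothetical gesture names, command strings and centroid values that do not come from the study.

```python
from statistics import mean

# Hypothetical gesture-to-command table; the article does not list gestures.
COMMANDS = {"fist": "GRIP", "open_hand": "RELEASE"}

# Hypothetical typical muscle-activity levels per gesture (arbitrary units).
CENTROIDS = {"open_hand": 0.1, "fist": 0.8}


def remove_drift(samples, window=5):
    """Stand-in for the learned denoiser: subtract a centred moving
    average so that slow motion drift is suppressed."""
    half = window // 2
    cleaned = []
    for i, x in enumerate(samples):
        lo, hi = max(0, i - half), min(len(samples), i + half + 1)
        cleaned.append(x - mean(samples[lo:hi]))
    return cleaned


def mean_abs(samples):
    """Mean absolute amplitude, a simple measure of muscle activity."""
    return mean(abs(x) for x in samples)


def classify(feature, centroids=CENTROIDS):
    """Nearest-centroid classifier, a stand-in for the gesture recogniser."""
    return min(centroids, key=lambda g: abs(centroids[g] - feature))


def gesture_to_command(samples):
    """Full pipeline: clean the signal, interpret it, return a command."""
    feature = mean_abs(remove_drift(samples))
    return COMMANDS[classify(feature)]


def synthetic_signal(amplitude, drift=0.5, n=20):
    """Hypothetical test signal: alternating muscle activity plus a
    steadily growing offset that mimics motion interference."""
    return [amplitude * (1 if i % 2 == 0 else -1) + drift * i for i in range(n)]


if __name__ == "__main__":
    weak = synthetic_signal(0.1)
    strong = synthetic_signal(1.0)
    print("raw, weak gesture:    ", classify(mean_abs(weak)))
    print("cleaned, weak gesture:", gesture_to_command(weak))
    print("cleaned, strong:      ", gesture_to_command(strong))
```

In this toy setup the drift dominates the raw signal, so a weak gesture is misread unless the interference is removed first. That is the same problem, in miniature, that the article says the real system addresses with a trained model.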

“This advancement brings us closer to intuitive and robust human–machine interfaces that can be deployed in daily life,” Chen said.

The system was tested in multiple dynamic conditions. Subjects used the device to control a robotic arm while running, while exposed to high-frequency vibrations and under a combination of disturbances. The device was also validated under simulated ocean conditions using the Scripps Ocean-Atmosphere Research Simulator at UC San Diego’s Scripps Institution of Oceanography. In all cases, the system delivered accurate, low-latency performance.

“This work establishes a new method for noise tolerance in wearable sensors,” Chen said. “It paves the way for next-generation wearable systems that are not only stretchable and wireless, but also capable of learning from complex environments and individual users.”
